PROXY LIMBS

An Exploration of Trackability in Virtual Reality

Unity / HTC VIVE / KINECT / LEAP MOTION / VIVE TRACKERS

Group Project with Sohee Woo and Tianlu Tang

Time: 4 weeks

Role: concept building / 3D Modeling / coding

Responsibility and Workflow:

- Concept development : Explored the effects of a multi-platform tracking experience on the performance of both the VR system and the user (actor).

- Research : Researched the currently available VR tracking devices and their tracking mechanisms.

- 3D Modelling : Created 3D models and textures in Maya and Unity.

- Coding and prototyping : Implemented all of the project's interaction in Unity (C#).

INTRO

Inspired by the variety of virtual reality systems available today, HyperTracking explores and compares the effects of single-platform and multi-platform tracking experiences. Cross-platform tracking opens up the opportunity to combine specialized systems into one VR experience (for example, detailed finger tracking with Leap Motion in tandem with the full-body rigged tracking of the Xbox Kinect). When these different technologies exist in the same space, they create a distorted and displaced sense of multiple proxy limbs, which in turn opens up the opportunity for specialized skill performance and interaction, and a more autonomous VR experience.

RESEARCH

Different platforms and devices allow for different tracking fidelity. The ones we looked at include: HTC Vive Lighthouse, Vive headset, Vive controller, Vive Tracker, Leap Motion, and Kinect for Xbox 360.

How would they affect each other’s tracking performance, and thus the performance of the user?

How would it change the perception of self in the digital experience?

To begin, we used rapid prototyping and thinking through making to explore the implications of tracking and resolution in VR under different contexts.

PROTOTYPES

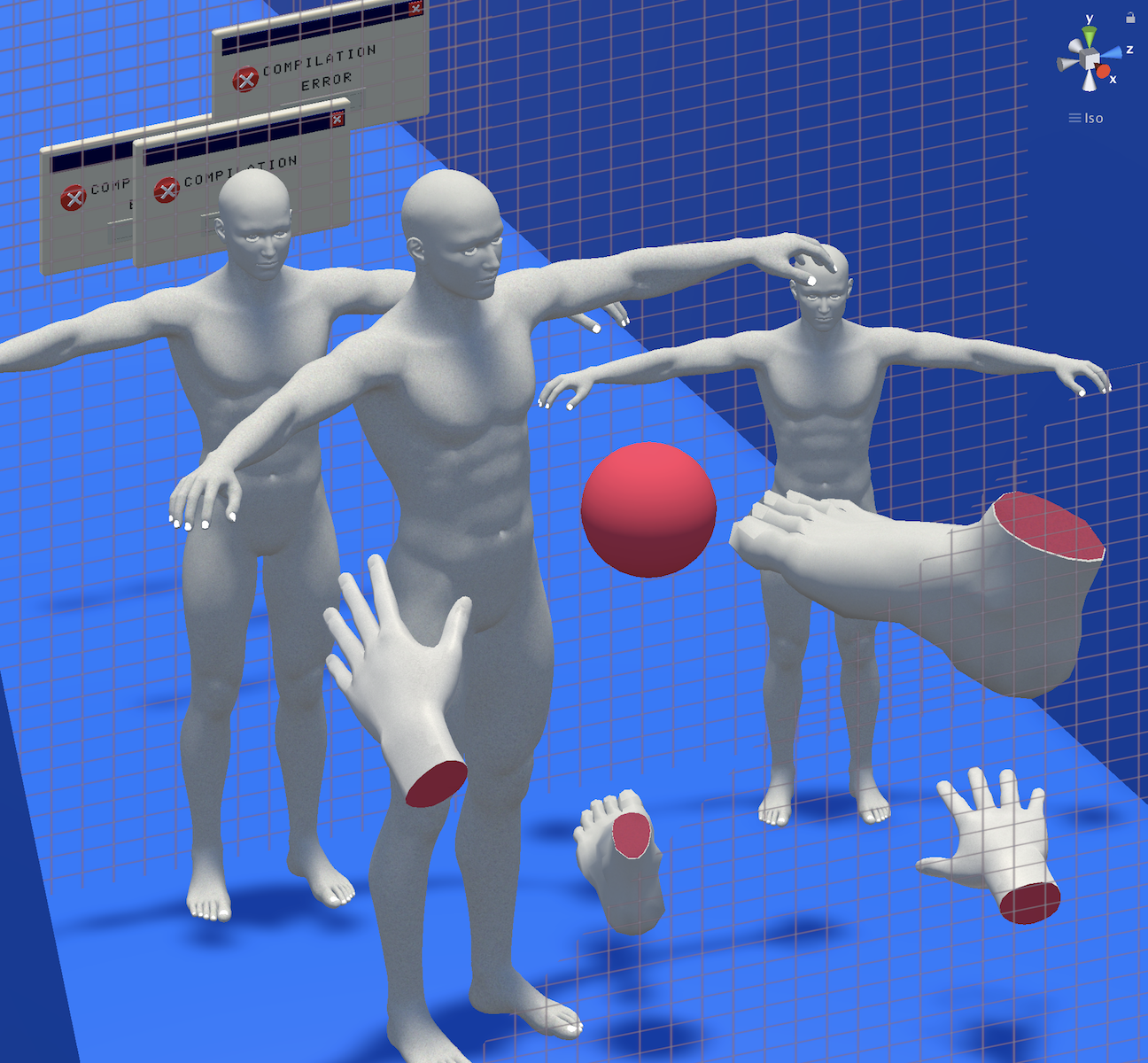

First Prototype

In this prototype, we explored the influence of the number of lighthouses on trackability in VR. We used hands at different levels of fidelity (from skeleton, to polygon, to detailed hand) as a metaphor for different levels of rendering and trackability in VR. We also used light cones in the scene to represent the VR outside-in laser lighthouses (the VR lighthouse uses non-visible light emitters to track objects).

Second Prototype

In this prototype, we explored how different devices that use the same tracking technique can affect each other, both in how they function and in how well they can be tracked in VR. The video uses a living room as an example: in an environment where everything is being tracked in VR, a remote control is affected by the outside-in tracking technology, because both the remote control and the lighthouse use the same technique, light emitters, to trigger objects. The video illustrates how using a remote control in real life while VR is running could cause glitches in either device.

Third Prototype

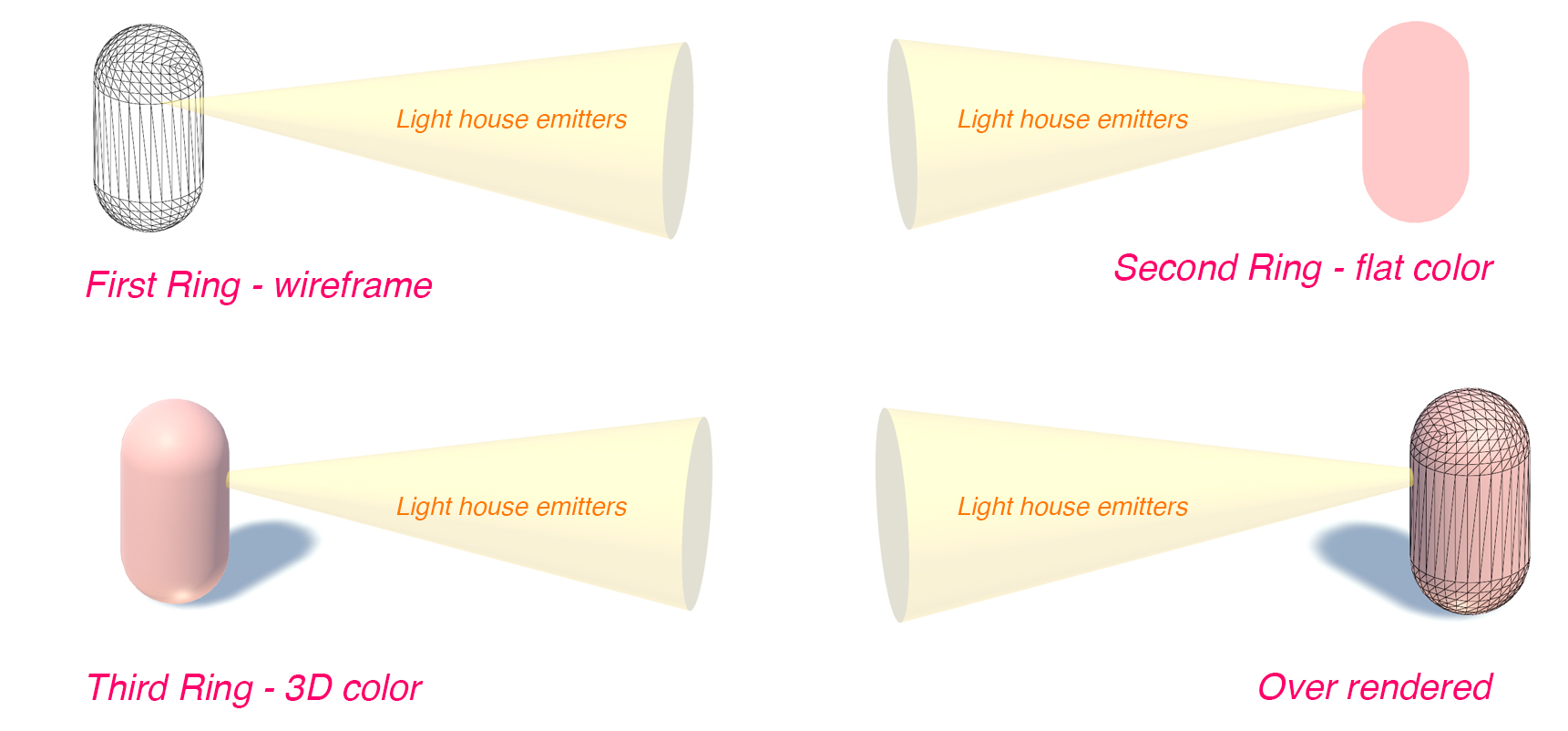

In this exploration, as in the first one, we used resolution to show trackability in VR, but this time we set the context in a much larger urban scene to add more complex interactions and variables to explore. Referencing the Concentric zone model, we structured the urban space into rings, simulating a city structure that accommodates VR technology. The outer ring is the least tracked area (fewest lighthouses), while the inner ring is the most tracked (most lighthouses). We also placed some random agents in the scene to observe their behavior and resolution changes as they move in and out of the three rings.

In this scenario, the different levels of resolution are: wireframe (outer ring), flat color (second ring), color with materials (third ring), and color with materials plus wireframe (over-rendered).
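The ring-to-resolution mapping can be sketched in a few lines. This is only an illustration in Python, not the project's Unity (C#) code: the radii, the `render_level` function, and the use of a flat ground plane are all assumptions made for the example. The over-rendered level is left out because the text does not say where it is triggered.

```python
import math

# Hypothetical ring boundaries in scene units (not taken from the project).
INNER_RADIUS = 10.0   # edge of the third (innermost) ring
MIDDLE_RADIUS = 25.0  # edge of the second ring


def render_level(x, z, center=(0.0, 0.0)):
    """Return the render style for an agent at (x, z) on the ground plane."""
    distance = math.hypot(x - center[0], z - center[1])
    if distance <= INNER_RADIUS:
        return "color with materials"  # most lighthouses, highest resolution
    if distance <= MIDDLE_RADIUS:
        return "flat color"            # second ring
    return "wireframe"                 # outer ring, fewest lighthouses


if __name__ == "__main__":
    # An agent walking outward from the center drops in resolution.
    for position in [(0.0, 0.0), (15.0, 0.0), (30.0, 40.0)]:
        print(position, render_level(*position))
```

In a scene like the one described, a check of this kind would run whenever an agent moves, so that its material changes as it crosses a ring boundary.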

In this scenario, the user can experience different trackability levels in an urban space and interact with agents, which are represented as citizens. Referencing the Concentric zone model, this is a starting point for studying the influence of trackability on the social distribution of a space in VR.

Final Prototype

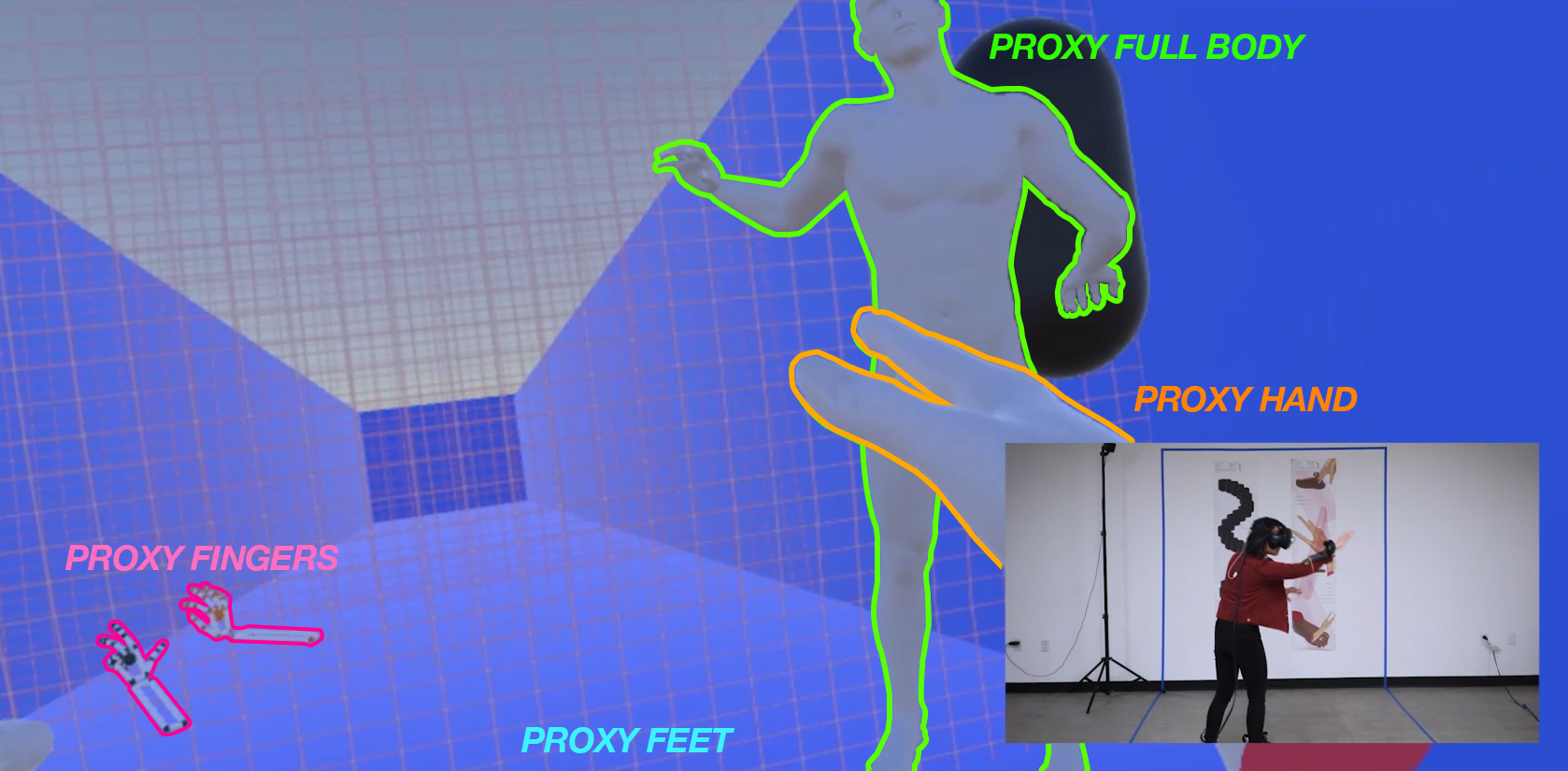

In this final production, we combined our insights from previous explorations on VR tracking.

Tracking in virtual reality uses sensors to deduce one’s position in space, giving the impression of real-time movement in a virtual environment. Using the HTC Vive as a case study, the more trackers are available, the more complete the 360-degree tracking coverage, resulting in a higher resolution of one’s virtual self. HyperTracking creates a visual experience illustrating what it means to be more actualized via more tracking in the VR world.

In comparison, cross-platform tracking creates more specialized possibilities as well as a warped sense of self. HyperTracking uses HTC Vive controllers and trackers, Xbox Kinect, and Leap Motion to deliver different versions of one’s self in the same space. You are expected to relearn and redefine how to move and function when your body parts are no longer a direct reflection of reality.

A warped sense of self: in the “hyper tracking” space, the scaling and warping of proxy limbs creates a confusing, removed sense of self. This creates a unique experience that can only be achieved in VR, and it also brings opportunities for new forms of user-digital interaction.

Reflection and further questions:

Rapid prototyping and thinking through making are an effective way to quickly and broadly learn about and explore the possibilities of a technology or product. Each prototype has the potential to become the brief for a research project. In this exploration, I worked with 3 different platforms in 1 digital environment, which gives 7 different possibilities for using them together or separately to create various effects and interactions. In this case, how specifically would different combinations of platforms enhance or diminish the user’s digital experience? What new forms of interaction can be generated?
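The count of 7 follows from the number of non-empty subsets of 3 platforms (2^3 - 1 = 7). As a small illustration, written in Python rather than the project's Unity (C#), the combinations can be listed as below; the `platform_combinations` helper and the platform labels are naming assumptions for this sketch only.

```python
from itertools import combinations

# The three platforms named in the write-up.
PLATFORMS = ("HTC Vive", "Kinect", "Leap Motion")


def platform_combinations(platforms=PLATFORMS):
    """List every way to use one or more of the platforms together."""
    result = []
    for size in range(1, len(platforms) + 1):
        result.extend(combinations(platforms, size))
    return result


if __name__ == "__main__":
    for combo in platform_combinations():
        print(" + ".join(combo))
```

Each entry in the list is one candidate setup to prototype and compare, from a single device on its own up to all three running in the same space.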